Simplify Your AI Journey

Deliver AI at scale across cloud, data center, edge, and client—that's the power of Intel Inside®.

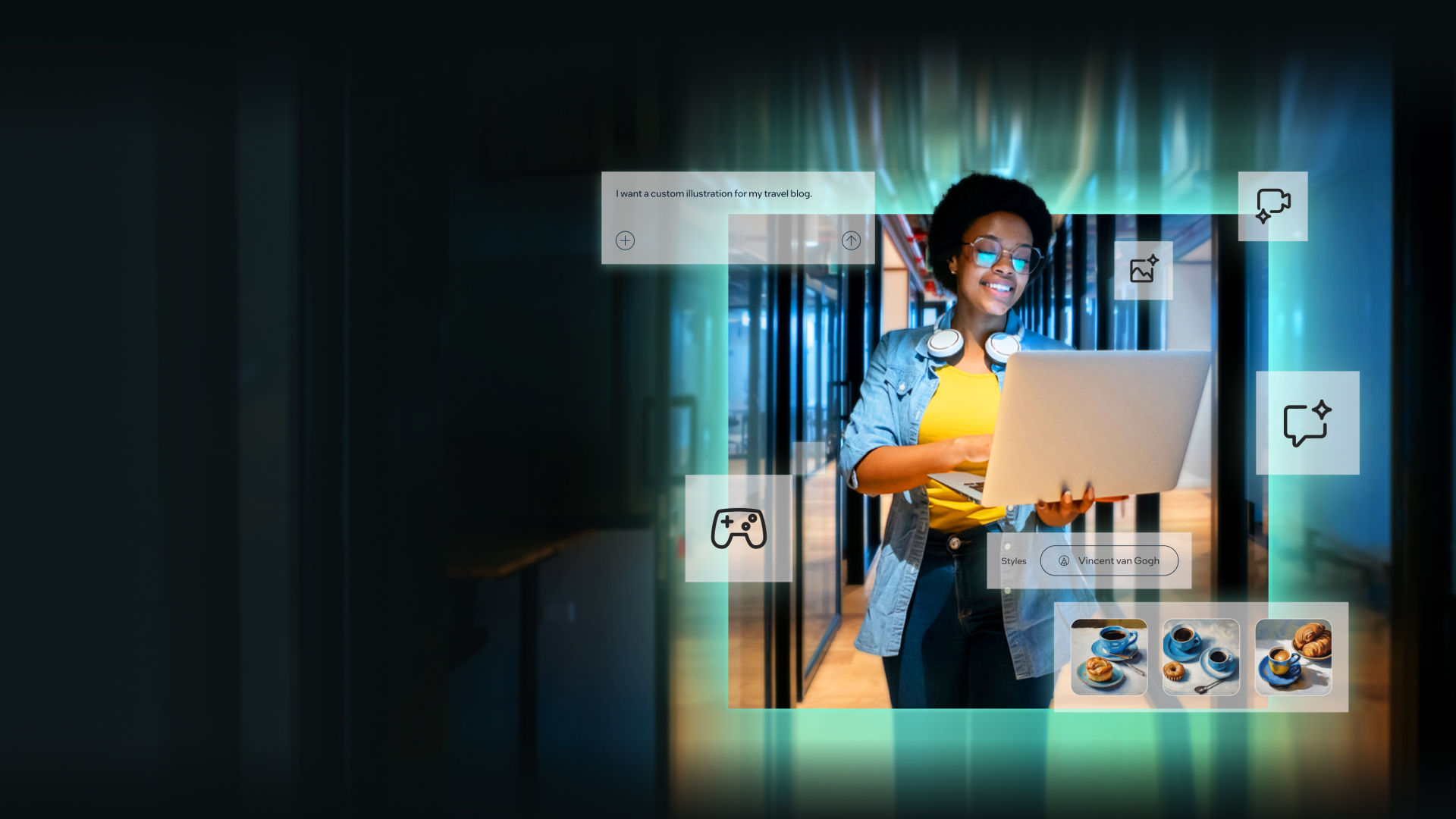

Elevate how you work, create, and play

Experience the potential of intelligent AI assistants, effortless text and image creation, enhanced collaboration effects, and more, right at your fingertips. Redefine your PC experience with heightened personalization and productivity, where every task is now smarter.

Ready to meet the demands of today’s data centers

Trusted performance. Exceptional efficiency. Intel® Xeon® processors are optimized to deliver new levels of performance across the greatest range of workloads, while offering improved efficiency and lower power consumption.

Developer resources

Explore Intel's development resources and search for software and tools by name, type, operating system, or topic.

AI

Development tools and resources help you prepare, build, deploy, and scale your AI solutions.

Explore:

- Developer Tools
- Open Source
- Gaming
- Cloud
- AI PC
- Edge
- HPC
- Explore All